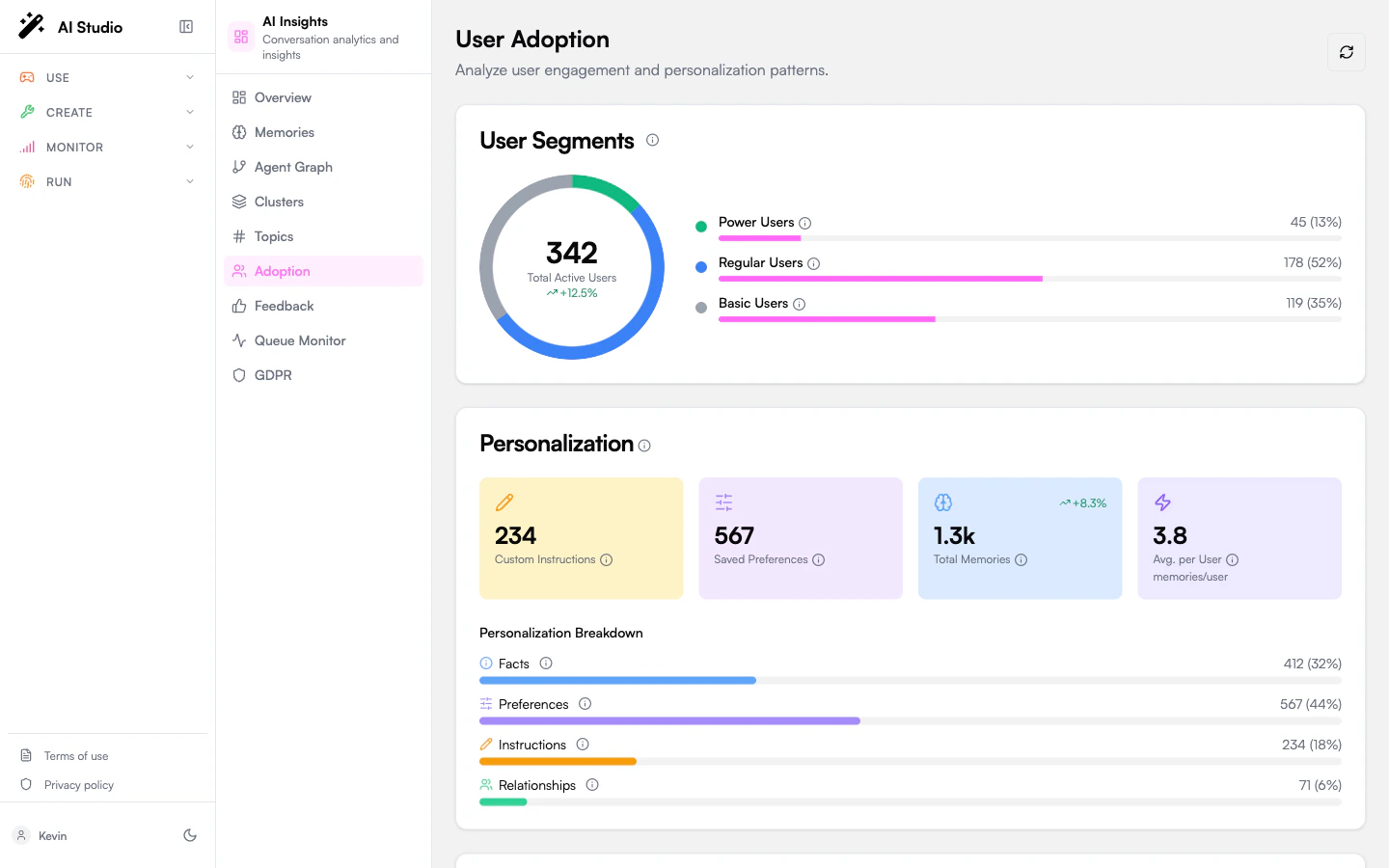

Adoption answers: who is using our agents, how deeply, and what should we do to grow each segment?

User segments

A donut chart segments your active users into three buckets:

| Segment | Description |
|---|---|
| Power Users | High-frequency, high-engagement users, typically returning daily and using more than one agent. |
| Regular Users | Users with sustained but moderate engagement. |
| Basic Users | Light users: a few interactions and rarely returning. |
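
The exact cutoffs behind each bucket are not stated here. As a rough sketch only, the segmentation could be expressed as below. The names `UserActivity`, `active_days`, and `agents_used`, the 30-day window, and every numeric threshold are hypothetical and chosen for illustration; they are not the product's actual rules.

```python
from dataclasses import dataclass


@dataclass
class UserActivity:
    """Hypothetical per-user activity summary over an assumed 30-day window."""
    user_id: str
    active_days: int   # days with at least one interaction
    agents_used: int   # distinct agents the user talked to


def segment(user: UserActivity) -> str:
    """Assign a segment using illustrative cutoffs (assumptions, not product rules)."""
    # Near-daily return plus more than one agent maps to Power Users.
    if user.active_days >= 20 and user.agents_used > 1:
        return "Power Users"
    # Sustained but moderate engagement maps to Regular Users.
    if user.active_days >= 4:
        return "Regular Users"
    # A few interactions and rare returns map to Basic Users.
    return "Basic Users"


users = [
    UserActivity("u1", active_days=25, agents_used=3),
    UserActivity("u2", active_days=8, agents_used=1),
    UserActivity("u3", active_days=1, agents_used=1),
]
segments = {u.user_id: segment(u) for u in users}
```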

Personalization

Four metrics that summarize how much the agents are learning about users:

| Metric | What it measures |
|---|---|
| Instructions | Standing instructions users have given (“always reply in French”, “keep answers under 100 words”). |
| Preferences | Captured preferences (formats, channels, tone). |
| Memories | Total memories of any type. |
| Avg Per User | Memories divided by active users, a proxy for personalization depth. |
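
The Avg Per User figure is a simple ratio. A minimal sketch of the arithmetic follows. The function name and the sample numbers are made up for illustration, and returning 0.0 when there are no active users is an assumption about how the empty case might be handled.

```python
def avg_memories_per_user(total_memories: int, active_users: int) -> float:
    """Memories divided by active users."""
    if active_users <= 0:
        # Assumption: report 0.0 rather than dividing by zero.
        return 0.0
    return total_memories / active_users


# Illustrative numbers only: 1200 memories across 300 active users.
avg = avg_memories_per_user(total_memories=1200, active_users=300)
```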

Quality

Four quality metrics rolled up across analyzed conversations:

| Metric | What it measures |
|---|---|
| Quality Score | A 0–100 rollup that combines evaluation scores, resolution rate, and feedback into a single number. Color-coded: green ≥70, amber 40–69, red <40. |
| Resolution Rate | Percentage of analyzed conversations the LLM judged as resolved. |
| Feedback Like Rate | Share of feedback items that are likes (vs dislikes). |
| Sentiment distribution | A horizontal bar showing the positive / neutral / negative split across analyzed conversations. |
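
The color bands and the like rate can be written down directly from the table. The sketch below uses hypothetical function names. It assumes that fractional scores between 69 and 70 count as amber, and that a like rate with no feedback is reported as 0.0. How the Quality Score weights its inputs is not documented here, so that rollup is not reproduced.

```python
def quality_color(score: float) -> str:
    """Map a 0-100 Quality Score to its color band: green >=70, amber 40-69, red <40."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 70:
        return "green"
    if score >= 40:
        return "amber"  # assumption: anything from 40 up to (not including) 70
    return "red"


def like_rate(likes: int, dislikes: int) -> float:
    """Share of feedback items that are likes (vs dislikes)."""
    total = likes + dislikes
    # Assumption: report 0.0 when there is no feedback at all.
    return likes / total if total else 0.0


band = quality_color(72)
rate = like_rate(likes=3, dislikes=1)
```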

Common patterns

A short table of recurring usage patterns the analytics pipeline detected, for example “users asking the same workflow question across multiple agents”. Each row shows the pattern, the number of associated instructions, and how many distinct users it covers.

Recommendations

Priority-ranked actions to grow adoption. Each recommendation has:

- A priority badge (high / medium / low).
- A short action string.
- An optional target segment (e.g. “Basic Users”).
- An optional metric value (e.g. “+18% personalization”) showing the projected impact.
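
The fields listed above could be modeled as a small record and ordered by priority. This is a sketch only: the `Recommendation` class, its field names, and the sample action strings are hypothetical and do not reflect an actual Prisme.ai API or payload shape.

```python
from dataclasses import dataclass
from typing import List, Optional

# Lower number sorts first: high, then medium, then low.
PRIORITY_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Recommendation:
    """Hypothetical shape mirroring the fields described in the list."""
    priority: str                          # "high", "medium", or "low"
    action: str                            # short action string
    target_segment: Optional[str] = None   # e.g. "Basic Users"
    metric_value: Optional[str] = None     # e.g. "+18% personalization"


def rank(recs: List[Recommendation]) -> List[Recommendation]:
    """Return recommendations ordered by priority; ties keep their input order."""
    return sorted(recs, key=lambda r: PRIORITY_ORDER[r.priority])


# Sample data invented for illustration.
recs = [
    Recommendation("low", "Review unused agents"),
    Recommendation("high", "Onboard light users", target_segment="Basic Users",
                   metric_value="+18% personalization"),
    Recommendation("medium", "Promote a second agent", target_segment="Regular Users"),
]
ranked = rank(recs)
```

Because both optional fields default to `None`, a recommendation with only a priority and an action remains valid.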

Aggregation freshness

Adoption metrics are computed from a daily aggregation. After a large analysis run or fresh memory capture, the page may show stale numbers until the next cycle. A “Waiting for aggregation” banner is shown when the most recent aggregation hasn’t completed yet. You can force a recomputation from the Organization dashboard using the Recalculate metrics menu action.

Where to go next

Memories

The memory store the personalization metrics are built on.

Feedback

Likes, dislikes, and their categorized reasons.

Topics

What users are talking to your agents about.

Agent network

The agent fleet whose adoption you’re measuring.