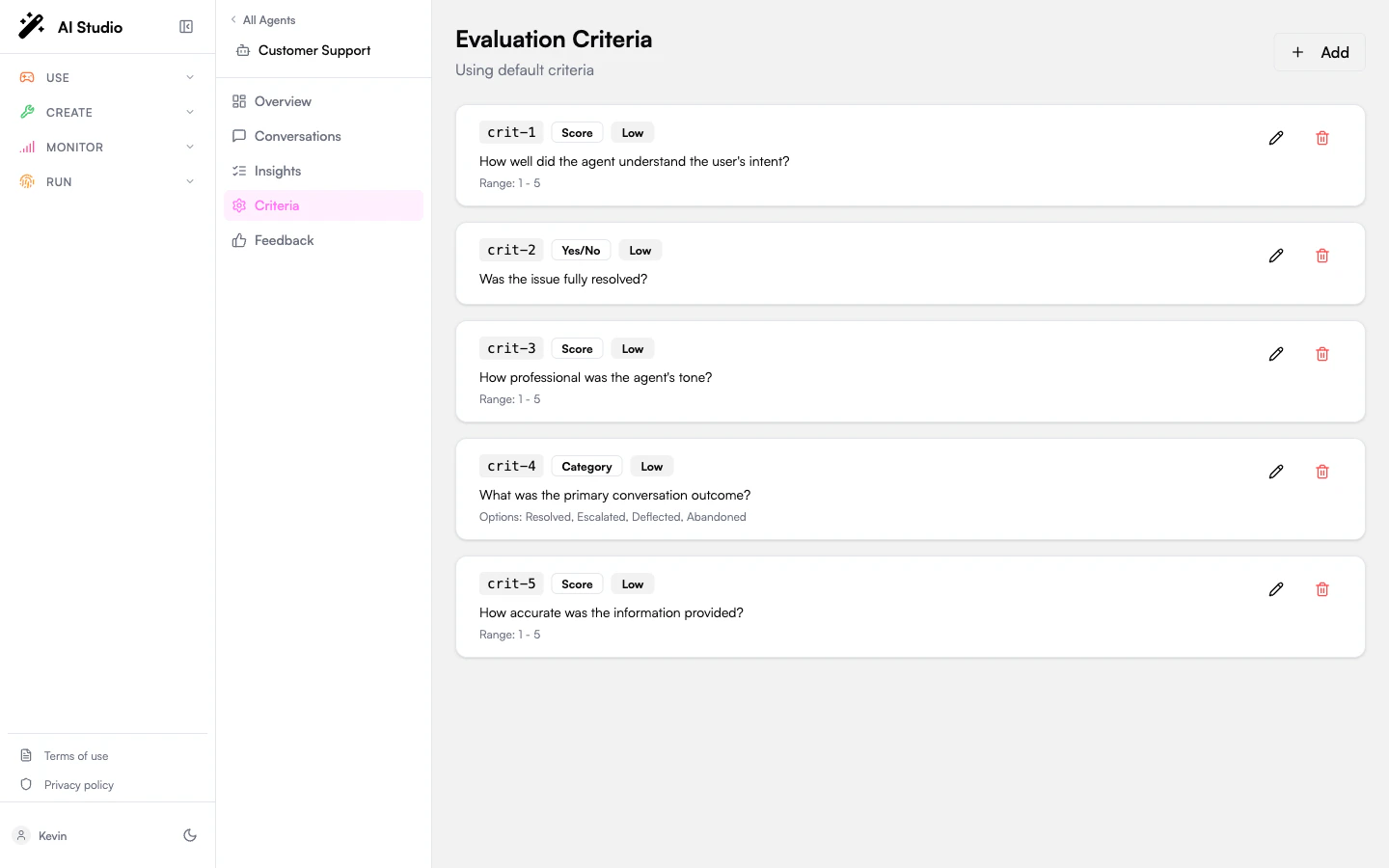

Evaluation criteria are the questions the LLM is asked when it analyzes a conversation. Each criterion has an ID, a question, a type, an optional value range or option list, and a weight that contributes to the overall evaluation score. You define criteria per agent, on the Criteria entry of the agent sidebar.

The default criteria

Until you customize them, every analyzed conversation is judged against these four criteria:

| ID | Type | Question | Weight |
|---|---|---|---|
| resolution | Yes/No | Was the user’s issue resolved? | 0.30 |
| clarity | Score (1–5) | Rate the clarity of the agent’s responses | 0.20 |
| accuracy | Score (1–5) | Rate the accuracy of information provided | 0.30 |
| sentiment | Category (positive / neutral / negative) | What was the overall user sentiment? | 0.20 |
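As a rough mental model, the default set can be written down as plain data. The sketch below is illustrative only: the `Criterion` class, its field names, and the type labels (`yes_no`, `score`, `category`) are hypothetical and do not reflect the product's storage format or API. It also checks that the four default weights add up to 1.0.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Criterion:
    """Hypothetical in-memory model of one evaluation criterion."""
    id: str
    type: str  # "yes_no", "score", or "category" (labels assumed for this sketch)
    question: str
    weight: float
    score_range: Optional[Tuple[int, int]] = None  # only meaningful for "score"
    options: Tuple[str, ...] = ()                   # only meaningful for "category"


# The four defaults, copied from the default criteria listed above.
DEFAULT_CRITERIA = [
    Criterion("resolution", "yes_no", "Was the user's issue resolved?", 0.30),
    Criterion("clarity", "score", "Rate the clarity of the agent's responses",
              0.20, score_range=(1, 5)),
    Criterion("accuracy", "score", "Rate the accuracy of information provided",
              0.30, score_range=(1, 5)),
    Criterion("sentiment", "category", "What was the overall user sentiment?",
              0.20, options=("positive", "neutral", "negative")),
]

# Rounded to sidestep floating-point noise in the sum.
total_weight = round(sum(c.weight for c in DEFAULT_CRITERIA), 2)
print(total_weight)
```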

The three criterion types

- Yes/No

- Score

- Category

Yes/No: A boolean question. The LLM answers Yes or No with a brief reasoning string. Best for compliance gates and pass/fail checks.

Example: “Did the agent ask for verification before sharing account details?”

Weights

Each criterion has a weight that determines its contribution to the overall evaluation score (0–100) shown across Insights.

| Weight | Numeric value |
|---|---|
| Low | 0.25 |
| Medium | 0.50 |
| High | 0.75 |
| Critical | 1.00 |
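This page does not spell out how the weights are combined, so the following is only one plausible reading: normalize each answer to a value between 0 and 1, take the weight-weighted average, and scale it to 0–100. The function names, the normalization rules, and the category-to-value mapping are all assumptions made for this sketch, not the product's formula.

```python
def normalize(kind, answer, lo=1, hi=5, category_values=None):
    """Map one criterion answer to a value in [0, 1] (assumed rules)."""
    if kind == "yes_no":
        return 1.0 if answer else 0.0
    if kind == "score":
        return (answer - lo) / (hi - lo)
    if kind == "category":
        # Assumption: the caller decides how favorable each category is.
        return category_values[answer]
    raise ValueError(f"unknown criterion type: {kind}")


def overall_score(items):
    """Weighted average of normalized answers, scaled to 0-100.

    `items` is a list of (weight, normalized_value) pairs.
    """
    total_weight = sum(w for w, _ in items)
    if total_weight == 0:
        raise ValueError("at least one criterion needs a non-zero weight")
    return 100 * sum(w * v for w, v in items) / total_weight


# Hypothetical mapping for the sentiment categories.
sentiment_values = {"positive": 1.0, "neutral": 0.5, "negative": 0.0}

items = [
    (0.30, normalize("yes_no", True)),   # resolution: Yes
    (0.20, normalize("score", 4)),       # clarity: 4 on a 1-5 scale
    (0.30, normalize("score", 5)),       # accuracy: 5 on a 1-5 scale
    (0.20, normalize("category", "neutral", category_values=sentiment_values)),
]
print(round(overall_score(items), 1))
```

With the default weights and these sample answers, the sketch yields a score of 85. Dividing by the total weight keeps the result within 0–100 even when the weights do not sum to 1.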

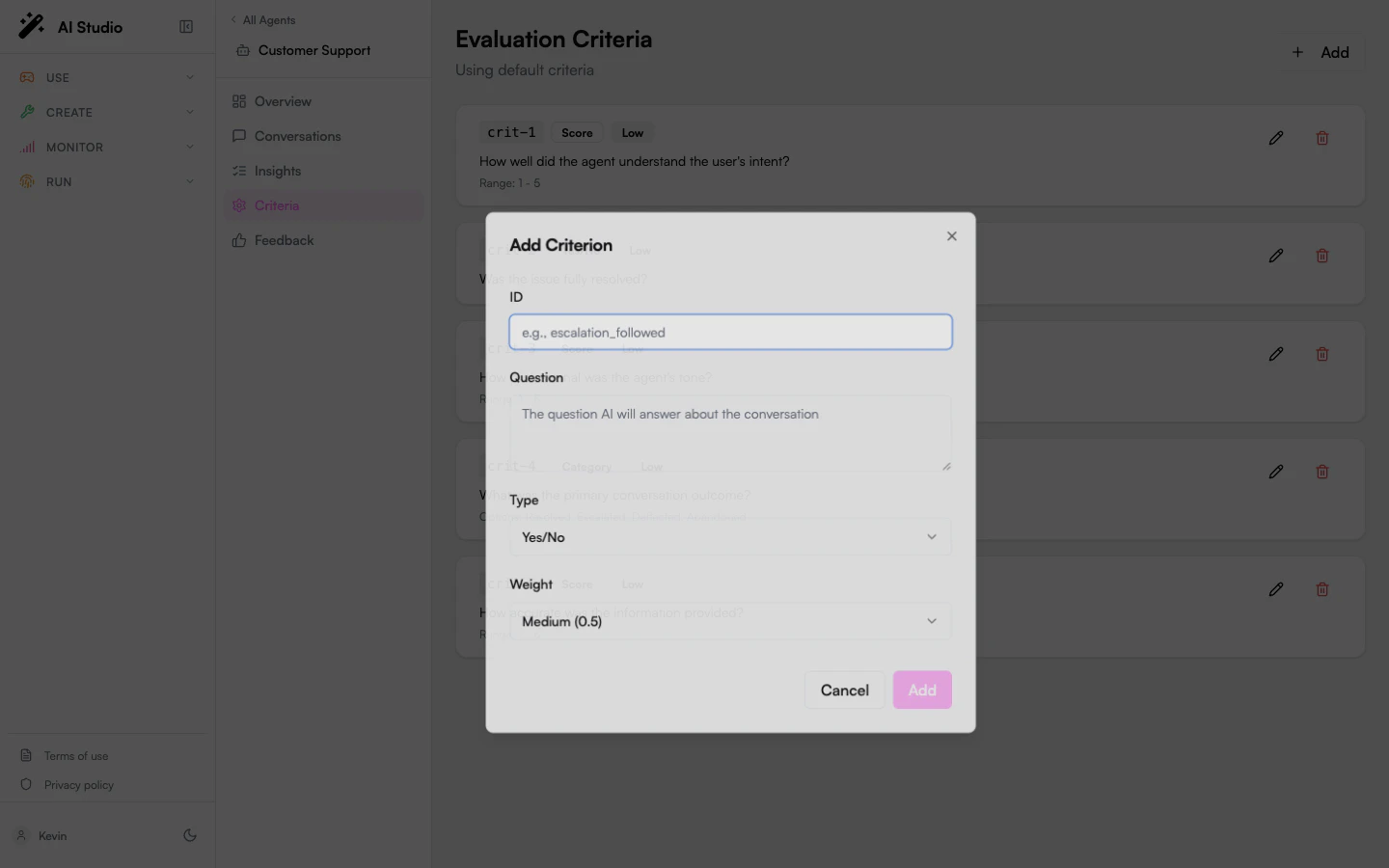

Add a criterion

Set the ID

A short snake_case identifier, for example escalation_followed. The ID is what shows up under Evaluations on each insight detail.

The ID is immutable once saved: you can’t edit it later.

Write the question

Plain natural language describing what the LLM should evaluate. Be specific about what counts as a “yes”, a high score, or a particular category. The clearer the question, the more consistent the answers.

Pick the type

Yes/No, Score, or Category. For Score, set the Min and Max. For Category, list the options comma-separated.

Save the criterion

The new criterion appears in the list, and the form shows Save Changes. Your edits stay local until you save.
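The steps above imply a few checks you can run on a criterion definition before entering it in the form. The following is a hypothetical pre-flight validator, not the product's own validation: the function names, the type labels, the exact snake_case pattern, and the "at least two options" rule are assumptions.

```python
import re

# Assumed pattern: lowercase words separated by single underscores.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")


def parse_options(raw):
    """Split a comma-separated option list, dropping blanks and extra spaces."""
    return [part.strip() for part in raw.split(",") if part.strip()]


def validate_criterion(criterion_id, question, kind,
                       min_value=None, max_value=None, options=""):
    """Return a list of problems; an empty list means the definition looks fine."""
    problems = []
    if not SNAKE_CASE.match(criterion_id):
        problems.append("ID should be short snake_case, e.g. escalation_followed")
    if not question.strip():
        problems.append("question must not be empty")
    if kind == "score":
        if min_value is None or max_value is None or min_value >= max_value:
            problems.append("Score needs a Min that is lower than its Max")
    elif kind == "category":
        # Assumption: a category with fewer than two options is not useful.
        if len(parse_options(options)) < 2:
            problems.append("Category needs at least two options")
    elif kind != "yes_no":
        problems.append(f"unknown type: {kind}")
    return problems


print(validate_criterion(
    "escalation_followed",
    "Did the agent follow the escalation policy?",
    "yes_no",
))
```

Because the ID cannot be changed after saving, a check like this is most useful before the first save.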

Edit or delete a criterion

Each row has a pencil (edit) and trash (delete) icon. Edits open the same dialog with fields prefilled. The ID field is disabled: editing in place would invalidate historical insights, so the product treats it as immutable.

Deleting a criterion removes it from future analysis passes. Existing insights keep their old criterion answers; they’re not retroactively recomputed. To re-evaluate against the new schema, see Re-analyze on the Conversations page.

Best practices

- Keep questions outcome-oriented. “Did the agent confirm the user’s identity before sharing PII?” beats “Was the agent secure?”

- Use Yes/No for gates, Score for quality, Category for taxonomies. Mixing types lets the dashboard show pass-rates, distributions, and breakdowns side by side.

- Limit to ~5–8 criteria. More than that, and the LLM-as-judge tends to phone in shorter reasoning. Better to have a tight schema with high-quality answers.

- Use stable IDs. Once you have a few weeks of insights, renaming or deleting a criterion makes historical comparison harder.

- Preview with a single conversation. After defining new criteria, open a representative conversation and click Re-analyze to see how the LLM answers them before you let the batch pass apply them at scale.

How criteria show up downstream

- On the Insights detail panel: under Evaluations, each criterion ID, the answer, and the LLM’s reasoning string.

- On the Agent overview: folded into the Average Score and (for resolution) the Resolution Rate.

- On the Organization dashboard: folded into the Average Score rolled across the fleet.

- In CSV exports: the standard columns (score, resolved, sentiment, topics, summary). Custom criterion answers are visible in the UI but not currently part of the CSV; see Exporting data.
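As a sketch of working with the export, the snippet below computes an average score and a resolution rate from the standard columns. The column names come from the list above, but the exact header spelling, the `true`/`false` encoding of `resolved`, and the sample rows are assumptions made up for illustration. Check a real export before relying on them.

```python
import csv
import io

# Made-up sample standing in for an exported file.
SAMPLE = """score,resolved,sentiment,topics,summary
85,true,positive,billing,Refund issued
40,false,negative,login,User could not reset password
70,true,neutral,billing,Invoice explained
"""


def summarize(csv_text):
    """Aggregate the standard export columns into a few headline numbers."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        return {"conversations": 0, "average_score": None, "resolution_rate": None}
    # Assumption: resolved conversations are marked with the string "true".
    resolved = sum(1 for r in rows if r["resolved"].strip().lower() == "true")
    return {
        "conversations": len(rows),
        "average_score": sum(float(r["score"]) for r in rows) / len(rows),
        "resolution_rate": resolved / len(rows),
    }


summary = summarize(SAMPLE)
print(summary)
```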

Where to go next

Read insights

See your criterion answers in the per-conversation detail.

Re-analyze conversations

Apply changed criteria to past conversations.