Configuring Knowledges

Knowledges is Prisme.ai’s product for agentic assistants powered by tools and retrieval-augmented generation (RAG). It enables teams to build agents that leverage internal knowledge across various formats, interact with APIs via tools, and collaborate with other agents through context sharing, which enables multi-agent workflows with robust LLM support and enterprise-grade configuration options. This guide explains how to configure Knowledges in a self-hosted environment.

Core Capabilities

- Configure multi-model support with failover and fine-tuned prompts

- Automate agent provisioning via Builder

- Enforce limits, security, and monitoring

- Enable built-in tools such as summarization, search, code interpreter, and web browsing

- Integrate with OpenSearch, Redis, or other vector stores

LLM Providers

OpenAI

Configure the llm.openai.openai.models field.

OpenAI Azure

Configure the llm.openai.azure.resources.*.deployments field. Multiple resources can be added by appending additional entries to the llm.openai.azure.resources array.

Bedrock

Configure the llm.bedrock.*.models and llm.bedrock.*.region fields. Multiple regions can be used by appending additional entries to the llm.bedrock array.

Vertex

Configure the llm.openai.vertex field. When deploying a model through Vertex, the model name should be the full endpoint name.

The modelAliases feature is particularly handy for this provider: it lets you expose a more readable name to your users in place of the full endpoint name.

Note that the service_account credentials should be omitted if you deployed your platform on GCP and rely on IAM authentication. When provided, the service_account value should be either:

- JSON

- Stringified JSON (handy if you save it within a secret)
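As an illustration of what accepting both forms means, here is a minimal Python sketch that normalizes either form to the same object. The function name and the sample keys are hypothetical and are not part of Knowledges; the sketch only shows that a stringified value decodes to the same structure as the plain JSON value.

```python
import json
from typing import Union


def normalize_service_account(value: Union[dict, str]) -> dict:
    """Return the service account as a dict, whether it was given as a
    JSON object or as stringified JSON (for example read from a secret)."""
    if isinstance(value, dict):
        return value
    parsed = json.loads(value)
    if not isinstance(parsed, dict):
        raise ValueError("service_account must decode to a JSON object")
    return parsed


# Hypothetical, truncated credentials used only for illustration.
as_json = {"type": "service_account", "project_id": "my-project"}
as_string = json.dumps(as_json)
```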

OpenAI-Compatible Providers

Configure the llm.openailike field.

Optional parameters:

- provider: The provider name used in analytics metrics and dashboards.

- options.excludeParameters: Allows exclusion of certain OpenAI generic parameters not supported by the given model.

- options.headers: Custom HTTP headers sent with every request to this provider. Values can be static strings, or {correlationId} or {userId} as the full value to inject runtime context.
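The options.headers rule above can be pictured with the following Python sketch. It is an assumption about the behavior, not the actual implementation: the header names, the runtime values, and the function name are hypothetical, and the sketch reads "as the full value" as meaning that a placeholder embedded in a longer string is left untouched.

```python
from typing import Dict

# Placeholders named in the options.headers description.
PLACEHOLDERS = ("{correlationId}", "{userId}")


def resolve_headers(headers: Dict[str, str], context: Dict[str, str]) -> Dict[str, str]:
    """Replace a header value only when the whole value is a placeholder."""
    resolved = {}
    for name, value in headers.items():
        if value in PLACEHOLDERS:
            # Strip the braces to get the context key, e.g. "userId".
            resolved[name] = context[value[1:-1]]
        else:
            # Static strings are sent unchanged.
            resolved[name] = value
    return resolved


# Hypothetical header names and runtime values.
configured = {
    "X-Team": "platform",
    "X-Request-Id": "{correlationId}",
    "X-User": "{userId}",
}
runtime = {"correlationId": "abc-123", "userId": "user-42"}
print(resolve_headers(configured, runtime))
```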

Global Configuration

Default models

Default agent parameters

These parameters are configured at the root of the configuration.

Rate Limits

Rate limits can currently be applied at two stages of message processing:

- When a message is received (requestsPerMinute limits for projects or users).

- After RAG stages and before the LLM call (tokensPerMinute limits for projects, users, models, or requestsPerMinute limits for models).

The following fields configure these limits:

- limits.llm.users: Defines per-user message/token limits across all projects.

- limits.llm.projects: Defines default message/token limits per project. These limits can be overridden per project via the /admin page in Knowledges.

- limits.files_count: Specifies the maximum number of documents allowed in Knowledges projects. This number can also be overridden per project via the /admin page.

Model Aliases

If you have multiple LLM providers or regions with the same model names (for example gpt-4), you can give them custom names and map each custom name to the name expected by the provider. See Models Configuration for the structure of modelsSpecifications.
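The alias idea can be sketched in a few lines of Python. The custom names below are hypothetical and the lookup is only an illustration of the mapping, not the way Knowledges stores or resolves aliases.

```python
from typing import Dict

# Hypothetical custom names, each mapped to the name the provider expects.
model_aliases: Dict[str, str] = {
    "gpt-4-azure-eu": "gpt-4",
    "gpt-4-openai": "gpt-4",
}


def provider_model_name(name: str, aliases: Dict[str, str]) -> str:
    """Return the provider-facing name, falling back to the name itself."""
    return aliases.get(name, name)
```

Two distinct custom names can therefore point at the same provider model name while remaining distinguishable in the rest of the configuration.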

SSO Access

If you have your own SSO configured, you need to explicitly allow SSO-authenticated users to access Knowledges pages:

- Open the Knowledges workspace

- Open Settings > Advanced

- Manage roles

- Add your SSO provider technical name after prismeai: {} at the very beginning

Account Management

By default, sharing an agent with an external email address automatically sends an invitation email that lets the external user create an account and access the agent. You can disable this to enforce user control; only existing users will then be able to access shared agents.

Onboarding, Toasts & Statuses

Knowledges supports onboarding flows, multilingual statuses, and customizable notifications.

Models Configuration

Configure all available models with descriptions, rate limits, and failover.

Customize descriptions

- All LLM models (excluding those with type: embeddings) automatically appear in the Agent Creator menu, with the configured titles and descriptions, unless disabled at the agent level.

- displayName specifies the user-facing name of the model, replacing the technical or original model name with a more intuitive one.

- isHiddenFromEndUser specifies that a model is hidden from end users. This lets administrators temporarily disable a model or conceal it from the end-user interface without permanently removing it from the configuration.

Context & response tokens

- maxContext specifies the maximum token size of the context that can be passed to the model. It applies to both LLMs (full prompt size) and embedding models (maximum chunk size for vectorization).

- maxResponseTokens defines the maximum completion size requested from the LLM. It can be overridden in individual agent settings.

Provider specific parameters

additionalRequestBody.completions and additionalRequestBody.embeddings specify custom parameters that are sent in every HTTP request body for the given model. They can be used, for example, to enable AWS Guardrails.

Embeddings batch size

By default, document paragraphs are vectorized in batches of 96.
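To make the batching concrete, here is a small Python sketch that splits a list of paragraphs into batches of 96. It only illustrates the arithmetic; the function name is hypothetical and the actual embedding call is left out.

```python
from typing import Iterator, List, Sequence

DEFAULT_BATCH_SIZE = 96  # default stated in this section


def batched(paragraphs: Sequence[str], batch_size: int = DEFAULT_BATCH_SIZE) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size paragraphs."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(paragraphs), batch_size):
        yield list(paragraphs[start:start + batch_size])


paragraphs = [f"paragraph {i}" for i in range(200)]
sizes = [len(batch) for batch in batched(paragraphs)]
print(sizes)  # [96, 96, 8]
```

A document with 200 paragraphs would thus need three embedding requests at the default size, and fewer requests with a larger batch size if the embedding model accepts it.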

You can customize batchSize per model or globally.

Rate Limits

When modelsSpecifications.*.rateLimits.requestsPerMinute or modelsSpecifications.*.rateLimits.tokensPerMinute is defined, an error (customizable via toasts.i18n.*.rateLimit) is returned to any user attempting to exceed the configured limits. These limits are shared across all projects and users using the model.

If these limits are reached and modelsSpecifications.*.failoverModel is specified, projects with failover.enabled activated (disabled by default) automatically switch to the failover model.

Notes:

- tokensPerMinute limits apply to the entire prompt sent to the LLM, including the user question, system prompt, project prompt, and RAG context.

- Failover and tokensPerMinute limits also apply to intermediate queries during response construction (e.g., question suggestions, self-query, enhanced query, source filtering).
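The interaction between per-model limits and failover can be sketched as follows. This is a simplified Python model under stated assumptions: it uses fixed one-minute windows (the windowing strategy Knowledges actually uses is not documented here), and the class, function, and model names are hypothetical.

```python
import time
from typing import Callable, Dict, Optional


class MinuteWindowLimiter:
    """Counts requests and tokens for one model in fixed one-minute windows."""

    def __init__(self, requests_per_minute: Optional[int], tokens_per_minute: Optional[int],
                 clock: Callable[[], float] = time.monotonic):
        self.requests_per_minute = requests_per_minute
        self.tokens_per_minute = tokens_per_minute
        self.clock = clock
        self.window_start = clock()
        self.requests = 0
        self.tokens = 0

    def try_acquire(self, prompt_tokens: int) -> bool:
        now = self.clock()
        if now - self.window_start >= 60:
            # A new minute has started: reset the shared counters.
            self.window_start, self.requests, self.tokens = now, 0, 0
        if self.requests_per_minute is not None and self.requests + 1 > self.requests_per_minute:
            return False
        if self.tokens_per_minute is not None and self.tokens + prompt_tokens > self.tokens_per_minute:
            return False
        self.requests += 1
        self.tokens += prompt_tokens
        return True


def pick_model(model: str, prompt_tokens: int, limiters: Dict[str, MinuteWindowLimiter],
               failover_models: Dict[str, str], failover_enabled: bool) -> str:
    """Return the model to call, or raise when the limit is hit without a usable failover."""
    if limiters[model].try_acquire(prompt_tokens):
        return model
    backup = failover_models.get(model)
    if failover_enabled and backup and limiters[backup].try_acquire(prompt_tokens):
        return backup
    raise RuntimeError("rateLimit")
```

Here prompt_tokens stands for the size of the entire prompt, and the same check would run for intermediate queries as well as for the final answer.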

Environmental metrics

Environmental metrics can be calculated for model usage by setting the region where the model is hosted, the energy consumed per token (in kWh), and the PUE (Power Usage Effectiveness) profile.

Model display and capabilities

The display section defines the model’s brand, name, icon, eco-score, and cost information. The capabilities section lists which modalities the model supports.

Notes:

- Models from OpenAI, Gemini, and Bedrock currently support the file capability.

- Azure OpenAI does not yet provide this feature, although a community feature request is in progress.

- When file.enabled is set to true and the file size is within supported limits, the file is sent directly in Base64 to the LLM.

- Upcoming releases will introduce support for passing a file ID instead of raw Base64 data, using the storageProvider parameter (prisme, gcs, or s3). This will allow larger documents to be handled by referencing files stored in connected cloud storage rather than embedding their content directly.

- The file.maxSize parameter is expressed in bytes.
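The file capability rule can be sketched in Python as below. The function name is hypothetical and the sketch assumes the size check is made on the raw file content before encoding; it is not the actual Knowledges implementation.

```python
import base64
from typing import Optional


def encode_file_for_llm(content: bytes, enabled: bool, max_size: int) -> Optional[str]:
    """Return the Base64 payload, or None when the file must not be sent inline.

    max_size is expressed in bytes, like file.maxSize.
    """
    if not enabled or len(content) > max_size:
        return None
    return base64.b64encode(content).decode("ascii")
```

Keep in mind that Base64 encoding makes the payload roughly one third larger than the original file, which matters when choosing a maximum size.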

Prompt Caching (Bedrock only)

Some Bedrock models support static prompt caching. You can enable this with the promptCache.system option.

Vector Store Configuration

To enable retrieval-based answers, configure a vector store.

Tools and Capabilities

Knowledges enables advanced agents via tools.

file_search

RAG tool for semantic search within indexed documents.

file_summary

Summarize entire files when explicitly requested.

documents_rag

Used to extract context from project knowledge collections.

web_search

Optional tool enabled via a Serper API key.

code_interpreter

Python tool for data manipulation and document-based computation.

image_generation

Uses DALL-E or an equivalent model if enabled in the LLM config.

Advanced Features

Builder Automation

Knowledges projects and agents can be provisioned programmatically via Builder workflows.

Failover Models

Specify a backup model to switch to if the main one is overloaded. Make sure to enable failover in your workspace.

Token Management & Billing

Assign costs per million tokens to track model usage. This can be used with usage-based dashboards in Insights.
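As a worked example of per-million-token pricing, the Python sketch below computes the cost of one exchange. The split into separate input and output rates is an assumption made for illustration, as are the function name and the figures used in the usage note.

```python
def usage_cost(input_tokens: int, output_tokens: int,
               input_cost_per_million: float, output_cost_per_million: float) -> float:
    """Cost of a call given token counts and prices per million tokens."""
    return (input_tokens * input_cost_per_million
            + output_tokens * output_cost_per_million) / 1_000_000
```

With hypothetical rates of 2.0 per million input tokens and 6.0 per million output tokens, a usage of 1,000,000 input tokens and 500,000 output tokens costs 2.0 + 3.0 = 5.0 in the configured currency.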

Operations - ES/OS

Knowledges creates between 1 and 2 indices per agent (so 2 to 4 shards with default redundancy settings).

1. Crawler Storage

The crawler stores the full text content of crawled documents. This includes the raw extracted text from web pages, PDFs, and other document types that have been processed.

2. Vector Store

The vector store (when using ES/OS as your vector database) stores the embeddings for each paragraph/chunk extracted from documents. These vectors enable semantic search and RAG retrieval.

Index Structure

These two types of data are stored in separate indexes:

| Index | Purpose | Content | Index prefix |
|---|---|---|---|
| Crawler index | Full-text storage | Raw document text | <crawlerPrefix>-searchengine-webpages- |
| Vector index | Semantic search | Chunk embeddings | <vectorIndexPrefix>_<AIK_ID>_ |

- vectorIndexPrefix is the prefix configured in the Knowledges configuration under the vectorStore.vectorIndexPrefix key

- AIK_ID is the Knowledges workspace id

- crawlerPrefix is the ELASTIC_INDICES_PREFIX environment variable configured in the prismeai-searchengine and prismeai-crawler services
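The naming scheme from the table can be expressed as a short Python sketch, which may help when filtering indexes in scripts. The function names and the sample values in the usage note are hypothetical; only the two prefix patterns come from the table.

```python
def crawler_index_prefix(crawler_prefix: str) -> str:
    """Prefix of crawler indexes: <crawlerPrefix>-searchengine-webpages-"""
    return f"{crawler_prefix}-searchengine-webpages-"


def vector_index_prefix(index_prefix: str, aik_id: str) -> str:
    """Prefix of vector indexes: <vectorIndexPrefix>_<AIK_ID>_"""
    return f"{index_prefix}_{aik_id}_"


def classify_index(name: str, crawler_prefix: str, index_prefix: str, aik_id: str) -> str:
    """Tell whether an index name belongs to the crawler, the vector store, or neither."""
    if name.startswith(crawler_index_prefix(crawler_prefix)):
        return "crawler"
    if name.startswith(vector_index_prefix(index_prefix, aik_id)):
        return "vector"
    return "other"
```

For instance, with ELASTIC_INDICES_PREFIX set to prod, vectorStore.vectorIndexPrefix set to vectors, and a workspace id of abc123, the prefixes would be prod-searchengine-webpages- and vectors_abc123_.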

Cleanup

Indexes are created automatically when the first document is added to a Knowledges project. As documents are deleted over time, these indexes may eventually contain zero documents. This can happen for both the crawler index and the vector store index.

Important: Empty indexes still consume shards. To free up resources, it is recommended to delete empty indexes either:

- Manually via Kibana / OpenSearch Dashboards

- Through an automated cleanup task
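A cleanup task could start from the output of the cat indices API (GET _cat/indices?format=json), which lists each index with its document count as a string. The Python sketch below only selects candidates and deletes nothing; the function name, the prefixes, and the sample rows are hypothetical, and any real task should be reviewed against your own naming before it removes anything.

```python
from typing import Dict, List


def empty_indexes(cat_indices: List[Dict[str, str]], prefixes: List[str]) -> List[str]:
    """Return names of indexes that match one of the prefixes and hold zero documents."""
    return sorted(
        row["index"]
        for row in cat_indices
        if row.get("docs.count") == "0"
        and any(row["index"].startswith(prefix) for prefix in prefixes)
    )


# Hypothetical rows shaped like the JSON output of the cat indices API.
sample_rows = [
    {"index": "vectors_abc123_a", "docs.count": "0"},
    {"index": "vectors_abc123_b", "docs.count": "42"},
    {"index": "prod-searchengine-webpages-c", "docs.count": "0"},
    {"index": ".kibana_1", "docs.count": "0"},
]
candidates = empty_indexes(sample_rows, ["vectors_abc123_", "prod-searchengine-webpages-"])
print(candidates)
```

Restricting the selection to the Knowledges prefixes keeps unrelated empty indexes, such as system indexes, out of the candidate list.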

Next Steps

Chat

Create a ChatGPT-like agent within your organization — but secure, customizable, and connected to your tools and knowledge.

Monitoring & Logs

Monitor usage and LLM activity

Create an agent

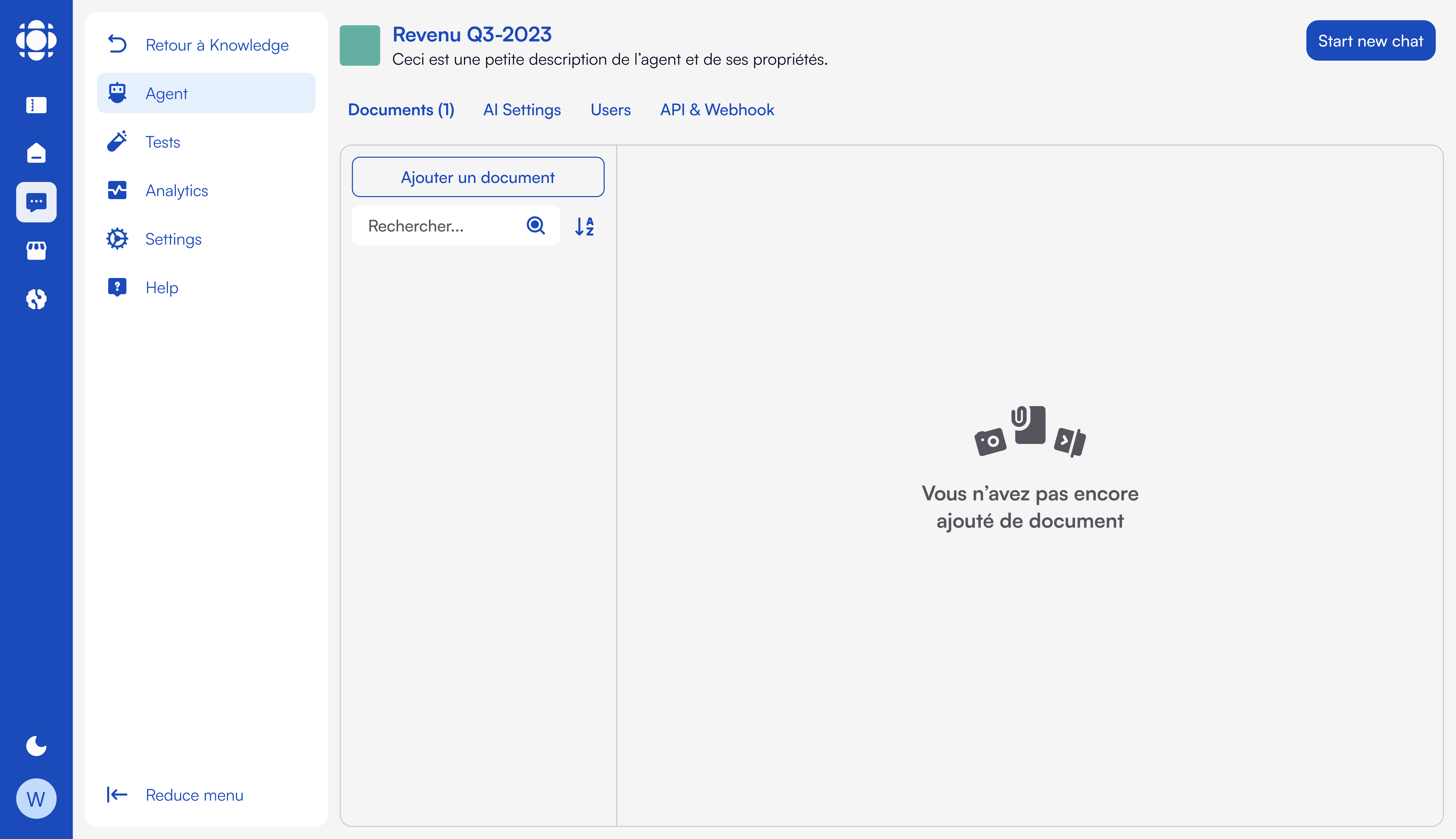

Learn more about agent creation